As the global artificial intelligence (AI) race accelerates, widespread adoption is driving a parallel increase in exposure to ethical failures, regulatory non-compliance, data misuse, and accountability gaps. AI has moved beyond isolated innovation teams into core business processes, influencing decisions with legal, financial, and societal consequences. AI governance, however, has not kept pace. Most organisations can describe what their AI systems do, but few can demonstrate consistent ownership, risk assessment, and ongoing supervision. This governance gap is a root cause of many AI-related failures.

ISO/IEC 42001:2023 was introduced to close that gap. It establishes a formal Artificial Intelligence Management System (AIMS), applying the same disciplined management approach used in information security, quality, and privacy standards.

This article explains ISO/IEC 42001:2023 for senior leaders, covering its clauses, annexes, certification requirements, and practical implementation, and highlighting how organisations can establish effective AI governance. Subsequent articles in this series will explore how risks arise across the AI lifecycle, discuss practical approaches to operationalising controls, and address common implementation gaps and strategies to overcome them.

What ISO/IEC 42001:2023 Is and Isn’t

ISO/IEC 42001:2023 is the world’s first international certifiable management system standard for an Artificial Intelligence Management System (AIMS). It specifies requirements for establishing, implementing, maintaining, and continually improving AI governance in organisations.

As a management system standard, ISO/IEC 42001 addresses organisational governance rather than technical implementation. It requires organisations to define how AI use cases are approved, how risks are identified and treated, how responsibilities are assigned, how changes are controlled, and how oversight is maintained over time.

The standard does not regulate algorithms, certify individual AI models, prescribe technical tools or guarantee fair, bias-free, or optimal outcomes. Responsibility for lawful, ethical, and context-appropriate AI remains with the organisation.

The Three Core Pillars of Trustworthy AI

While many AI governance programmes start with controls and audits, trust is built on outcomes users experience. ISO/IEC 42001 provides a framework that organisations can use to embed the three core pillars of trustworthy AI.

Transparency ensures organisations can clearly identify where AI is used, for what purpose, and with what limitations.

Accountability ensures AI outcomes are owned by people, not systems. Clear roles, escalation paths, and human oversight prevent responsibility from being obscured by automation.

Fairness focuses on preventing systematic harm at scale, requiring organisations to assess, monitor, and correct biased outcomes throughout the AI lifecycle.

Together, these pillars translate ethical expectations into governance practices that scale across teams, systems, and jurisdictions, aligning trust, compliance, and business adoption.

Applicability, Scope, and Intended Use

ISO/IEC 42001 applies to any organisation that develops, deploys, or uses AI systems, regardless of size, sector, or technical maturity. This includes organisations procuring AI solutions from third parties and relying on them in operational or decision-making processes.

The scope and depth of governance are determined by the risk and potential impact of AI applications, not organisational size or technical maturity.

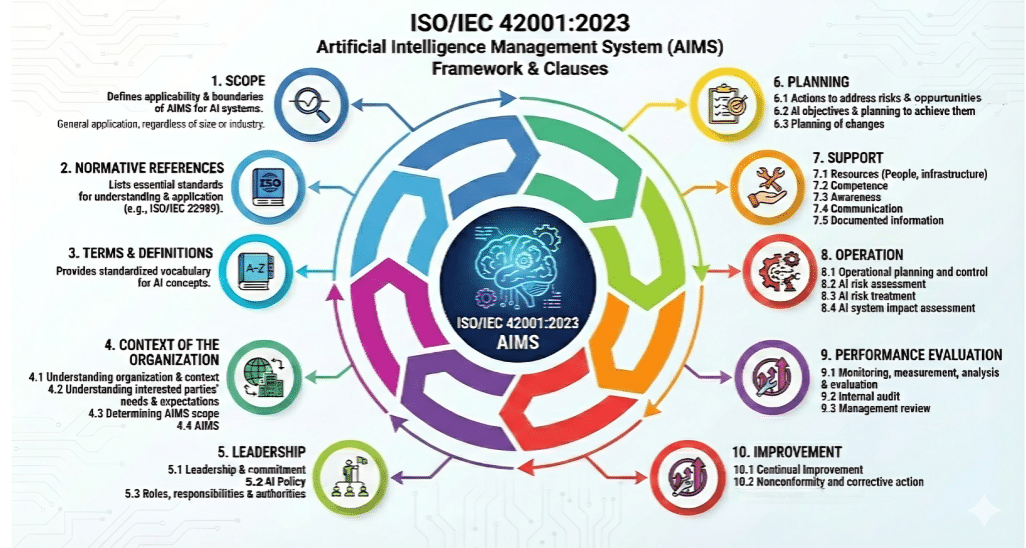

Structure of ISO/IEC 42001 and How to Interpret It

ISO/IEC 42001 follows the harmonised ISO management system structure, making it compatible with ISO/IEC 27001 (information security) and ISO/IEC 27701 (privacy). This enables integrated governance rather than parallel or competing frameworks.

The standard consists of:

- Clauses 1–10: Mandatory requirements

- Annexes A–D: Controls, informative guidance, risk context, and implementation examples

Overview of the Clauses and Annexes

Clauses 1 to 3 establish the foundation for consistent interpretation:

- Scope clarifies that the standard applies across the full AI lifecycle.

- Normative references cite ISO/IEC 22989, which supplies the AI concepts and terminology the standard relies on.

- Terms and definitions ensure governance decisions are grounded in shared terminology.

Clauses 4 to 6 anchor AI governance within the organisation:

- Clause 4 requires organisations to understand their context, stakeholders, and the scope of their AI management system.

- Clause 5 establishes leadership accountability, including executive commitment, AI policy, and clear assignment of roles.

- Clause 6 introduces risk-based planning, requiring organisations to identify AI risks and plan how they will be addressed.

Clauses 7 to 10 focus on execution, oversight, and improvement:

- Clause 7 ensures governance is supported by adequate resources, competence, awareness, communication, and documentation.

- Clause 8 addresses operational planning and control of AI systems across the lifecycle, including risk treatment and impact assessment activities.

- Clause 9 requires performance evaluation through monitoring, internal audits, and management review.

- Clause 10 focuses on continual improvement and corrective action.

The annexes extend this structure:

- Annex A provides reference AI governance controls across domains such as lifecycle management, data governance, transparency, and third-party relationships.

- Annex B provides implementation guidance for those controls, helping to prevent superficial or checkbox-driven implementation.

- Annex C outlines potential AI-related organisational objectives and risk sources, providing a shared risk vocabulary.

- Annex D offers practical examples of application and integration with other management systems.

Core Requirements of ISO/IEC 42001:2023

Organisations must demonstrate six core capabilities as part of an AIMS:

1. Defined Scope and Context

Identify AI applications, affected business processes, and impacted stakeholders. The AIMS must reflect actual AI use and organisational context.

2. Leadership, Ownership, and Accountability

Top management is accountable for AI governance outcomes. Typical ownership structures include:

- Board and executives: Overall accountability and management review

- CISO, Chief Risk Officer, or equivalent: Operational ownership of AIMS

- Legal and compliance teams: Regulatory and ethical oversight

- Product, engineering, and data teams: Operational lifecycle controls

3. Assessments and Planning

AI risks must be identified and assessed across legal and regulatory requirements, ethical and societal impacts, and operational and technical vulnerabilities, along with their potential financial, customer, and reputational consequences.

A structured risk assessment process establishes a disciplined method for identifying, analysing, and monitoring AI-related risks as systems are designed, deployed, modified, and operated.

In addition, an AI impact assessment extends the analysis beyond technical failure modes to examine potential effects on individuals, groups, and wider society. This ensures governance decisions are informed not only by model performance, but also by accountability, fairness, and potential harm.
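As a rough illustration of what a structured risk register might look like in practice, the sketch below scores each risk as likelihood multiplied by impact and flags those above a treatment threshold. The 1 to 5 scales, the threshold of 12, the class and function names, and the sample entries are all assumptions made for illustration; ISO/IEC 42001 does not prescribe a scoring method.

```python
from dataclasses import dataclass
from enum import Enum


class RiskCategory(Enum):
    # Categories mirror the three risk areas named in this section.
    LEGAL_REGULATORY = "legal and regulatory"
    ETHICAL_SOCIETAL = "ethical and societal"
    OPERATIONAL_TECHNICAL = "operational and technical"


@dataclass(frozen=True)
class AIRisk:
    use_case: str
    description: str
    category: RiskCategory
    likelihood: int  # 1 (rare) to 5 (almost certain); illustrative scale
    impact: int      # 1 (negligible) to 5 (severe); illustrative scale

    def __post_init__(self):
        # Reject scores outside the assumed 1-5 scale.
        for name in ("likelihood", "impact"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def needs_treatment(risks, threshold=12):
    """Return risks at or above the threshold, highest score first."""
    flagged = [r for r in risks if r.score >= threshold]
    return sorted(flagged, key=lambda r: r.score, reverse=True)


# Hypothetical register entries, for illustration only.
register = [
    AIRisk("CV screening model", "Biased shortlisting outcomes",
           RiskCategory.ETHICAL_SOCIETAL, 3, 5),
    AIRisk("Support chatbot", "Incorrect answers to customers",
           RiskCategory.OPERATIONAL_TECHNICAL, 4, 2),
    AIRisk("Credit scoring model", "Unexplained adverse decisions",
           RiskCategory.LEGAL_REGULATORY, 3, 4),
]

for risk in needs_treatment(register):
    print(f"{risk.score:>2}  {risk.use_case}: {risk.description}")
```

A real register would normally also record an owner, a treatment decision, and a review date for each entry, so that the assessment stays current as systems change.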

To operationalise these requirements, gap analysis typically initiates the implementation journey. It evaluates existing policies, controls, and operational practices against ISO/IEC 42001 requirements, identifying where governance structures are insufficient or misaligned.
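A gap analysis can be tracked in something as simple as a status per clause. The sketch below is one possible shape, assuming a three-level status scale and treating any clause that has not been assessed as missing; the names and the sample findings are hypothetical, and a real assessment would go down to sub-clause and Annex A control level.

```python
from enum import Enum


class Status(Enum):
    MET = "met"
    PARTIAL = "partially met"
    MISSING = "missing"


# Clause titles follow the harmonised ISO management system structure.
CLAUSES = {
    4: "Context of the organisation",
    5: "Leadership",
    6: "Planning",
    7: "Support",
    8: "Operation",
    9: "Performance evaluation",
    10: "Improvement",
}


def gap_report(findings):
    """List clauses that are not fully met; unassessed clauses count as missing."""
    gaps = []
    for number, title in CLAUSES.items():
        status = findings.get(number, Status.MISSING)
        if status is not Status.MET:
            gaps.append((number, title, status))
    return gaps


# Hypothetical assessment results, for illustration only.
findings = {
    4: Status.MET,
    5: Status.PARTIAL,
    6: Status.MISSING,
    7: Status.MET,
    8: Status.PARTIAL,
    9: Status.MET,
}

for number, title, status in gap_report(findings):
    print(f"Clause {number} ({title}): {status.value}")
```

Counting unassessed clauses as gaps is a deliberately conservative choice: it keeps an incomplete assessment from looking like a clean one.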

4. Lifecycle Governance

AI systems must be governed across design, development, deployment, operation, monitoring, change, and retirement. Governance must adapt as models, data, and use contexts evolve.
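One way to picture lifecycle governance is as a set of stage gates: a system moves between stages only along permitted paths and only with a named approver. The sketch below assumes a particular set of allowed transitions and treats monitoring as a continuous activity during operation rather than a separate stage. Both are illustrative choices and are not taken from the standard.

```python
from enum import Enum


class Stage(Enum):
    DESIGN = "design"
    DEVELOPMENT = "development"
    DEPLOYMENT = "deployment"
    OPERATION = "operation"  # monitoring is assumed to run throughout this stage
    CHANGE = "change"
    RETIREMENT = "retirement"


# Assumed transition rules; an organisation would define its own.
ALLOWED = {
    Stage.DESIGN: {Stage.DEVELOPMENT, Stage.RETIREMENT},
    Stage.DEVELOPMENT: {Stage.DESIGN, Stage.DEPLOYMENT, Stage.RETIREMENT},
    Stage.DEPLOYMENT: {Stage.OPERATION, Stage.RETIREMENT},
    Stage.OPERATION: {Stage.CHANGE, Stage.RETIREMENT},
    Stage.CHANGE: {Stage.DEVELOPMENT, Stage.DEPLOYMENT, Stage.RETIREMENT},
    Stage.RETIREMENT: set(),
}


def advance(current, target, approved_by):
    """Return an audit record for a permitted, approved stage transition."""
    if target not in ALLOWED[current]:
        raise ValueError(f"{current.value} -> {target.value} is not a permitted transition")
    if not approved_by:
        raise PermissionError("a named approver is required for every transition")
    return {"from": current.value, "to": target.value, "approved_by": approved_by}


# Hypothetical usage: a model in operation enters change control.
record = advance(Stage.OPERATION, Stage.CHANGE, approved_by="AI risk owner")
print(record)
```

Returning a record for each transition gives auditors a trail showing who approved which change, which is the kind of evidence assessed during certification.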

5. Support and Competence

Organisations must ensure adequate competence, awareness, communication, documentation, and resources to operate the AIMS effectively.

6. Monitoring, Audit, and Continual Improvement

AI governance must be monitored, internally audited, reviewed by management, and continually improved. Identified failures or weaknesses must result in corrective action.

Positioning ISO/IEC 42001 Among Global AI Governance Frameworks

ISO/IEC 42001 does not replace statutory or regulatory obligations such as the EU AI Act or other sector-specific laws; rather, it complements them by providing a structured and certifiable governance architecture. It establishes a lifecycle-wide AI management system with explicit top management accountability, defined roles, and a risk-based oversight model spanning design, development, deployment, and monitoring.

In comparison, ISO/IEC 27001 is similarly certifiable but narrower in scope, focusing on protecting information assets through security-centric risk management. The EU AI Act imposes binding legal obligations on high-risk AI systems through regulatory enforcement mechanisms, and Canada’s proposed Artificial Intelligence and Data Act would do the same for high-impact systems if enacted. By contrast, the NIST AI Risk Management Framework provides voluntary, policy-driven guidance that supports structured AI risk identification, measurement, and management, and is widely adopted across U.S. public and private sectors.

ISO/IEC 42001 Certification

ISO/IEC 42001 is a certifiable management system standard. Certification is conducted by an accredited third-party certification body and follows the standard ISO management system audit model:

1. Stage 1 Audit (Readiness Review)

The certification body reviews the AIMS design, documented scope, AI policy, risk assessment approach, and preparedness for full assessment. The objective is to confirm that the organisation is ready for Stage 2.

2. Stage 2 Audit (Certification Audit)

The certification body evaluates the effective implementation and operation of the AIMS. This includes evidence of AI governance processes in practice, lifecycle controls, risk treatment, monitoring, internal audit, and management review.

3. Certification Decision

Based on audit findings, the certification body determines whether certification can be granted, subject to closure of any nonconformities.

4. Surveillance Audits and Recertification

Ongoing surveillance audits verify continued conformity and continual improvement. Full recertification is typically required every three years.

Internal audits and management reviews are mandatory requirements of ISO/IEC 42001, but they are not certification stages. They are assessed by the certification body during Stage 2 and surveillance audits.

Benefits of ISO/IEC 42001

Beyond compliance, ISO/IEC 42001 delivers practical organisational benefits:

1. Reduced AI risk exposure through consistent, lifecycle-based oversight rather than fragmented or reactive controls.

2. Clear accountability for AI-driven decisions, reducing ambiguity around ownership, escalation, and responsibility.

3. Scalable governance that supports increasing AI adoption without proportional increases in complexity or manual review effort.

4. Improved trust with regulators, customers, and partners by demonstrating structured AI governance.

5. Efficient integration with existing ISO management systems, avoiding duplication and enabling faster, more consistent decision-making.

Most importantly, the standard prevents AI governance shortfalls from accumulating as systems, use cases, and dependencies grow.

How Anzen Approaches ISO/IEC 42001:2023

The challenge in implementing ISO/IEC 42001 lies in translating its governance architecture into a management system that operates coherently within the organisation’s existing risk, compliance, and executive oversight environment.

Many organisations approach AIMS as a documentation exercise, drafting AI policies, maintaining risk registers, and mapping controls to clauses. However, such implementations often remain disconnected from day-to-day operations and add to the administrative burden.

Our approach begins with actual AI use cases, not templates. We conduct structured gap assessments, AI risk and impact assessments, and certification-readiness reviews aligned with the ISO/IEC 42001 standard. Findings are mapped against relevant frameworks such as ISO/IEC 27001, enterprise risk management, privacy programmes, and obligations under the Digital Personal Data Protection Act to eliminate duplication while reinforcing traceability and control effectiveness.

Whether initiating AIMS implementation or preparing for certification, we deliver structured and auditable AI governance. ISO/IEC 42001 defines the baseline; maturity is demonstrated through disciplined implementation and sustained oversight.

In the next articles, we examine how these global AI governance frameworks compare in practice and how organisations can translate them into effective, operational controls that withstand regulatory and real-world scrutiny.