Across the past three articles in this series, we have explored the foundations of structured AI governance. The first article introduced ISO/IEC 42001:2023, outlining its clauses, annexes, and certification approach. The second compared it with leading frameworks, including ISO/IEC 27001:2022, the EU AI Act, and the NIST AI Risk Management Framework. The third examined the core risk pillars organisations must manage across the AI lifecycle. Each article examined a different aspect of the same underlying issue.

Most ISO/IEC 42001 programmes begin well but lose momentum within the first year, as policies accumulate while operational controls fail to take hold. This article focuses on ISO/IEC 42001:2023 implementation challenges and how organisations can address them.

Why Early Progress in ISO/IEC 42001 Slows Down

Organisations working towards ISO/IEC 42001 certification often follow a similar pattern. Early progress is strong, with policies drafted, committees formed, and plans approved. Within six to twelve months, however, momentum slows. Documentation grows, but practical controls remain limited, risk ownership is unclear, and certification timelines begin to slip. This reflects a structural flaw in how ISO/IEC 42001 is implemented.

The issue is that many organisations approach AI governance as a compliance exercise rather than a functioning management system. ISO/IEC 42001 is treated as a checklist, with policies and controls created to satisfy audits, while the underlying structures needed to make them effective are not properly put in place.

As a result, the AIMS may appear robust on paper but does little to reduce the organisation’s actual exposure to AI-related risks.

Eight Critical Challenges in Implementing AI Governance

1. No defined AI asset inventory

Effective AI governance implementation depends on a clear understanding of what AI systems are in use, where they are deployed, the data they rely on, and the decisions they influence. Most organisations do not begin with a structured inventory.

AI tools are often introduced by individual business units without central oversight. Third-party AI features built into SaaS platforms are rarely recognised as AI systems, even when they affect key decisions.

Without a complete and up-to-date inventory, AI risk assessment becomes guesswork. Organisations cannot confidently identify which systems need impact assessments, where the highest risks lie, or how lifecycle controls should be applied. As a result, the scope of the AIMS remains theoretical rather than rooted in actual operations.
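To make this concrete, here is a minimal sketch of what one inventory entry might capture. It is illustrative only: the `AISystemRecord` fields, the `needs_impact_assessment` rule, and the example systems are all assumptions made for this article, not requirements taken from ISO/IEC 42001.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class AISystemRecord:
    """One entry in a hypothetical AI asset inventory."""
    name: str
    owner: str                       # accountable individual or team
    deployed_in: str                 # business unit or product area
    data_sources: list[str]          # data the system relies on
    decisions_influenced: list[str]  # decisions the system affects
    third_party: bool = False        # e.g. an AI feature embedded in a SaaS platform
    last_impact_assessment: Optional[date] = None


def needs_impact_assessment(record: AISystemRecord) -> bool:
    """Assumed rule: flag systems that influence decisions but were never assessed."""
    return bool(record.decisions_influenced) and record.last_impact_assessment is None


# Illustrative entries only; names and owners are invented.
inventory = [
    AISystemRecord(
        name="cv-screening-assistant",
        owner="HR Operations",
        deployed_in="Recruitment",
        data_sources=["applicant CVs"],
        decisions_influenced=["candidate shortlisting"],
        third_party=True,
    ),
    AISystemRecord(
        name="ticket-summariser",
        owner="IT Service Desk",
        deployed_in="Internal support",
        data_sources=["support tickets"],
        decisions_influenced=[],
    ),
]

unassessed = [r.name for r in inventory if needs_impact_assessment(r)]
print(unassessed)
```

Even a simple structure like this ties each system to an owner, its data, and the decisions it touches, which is the information a risk assessment needs before it can prioritise anything.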

2. Fragmented ownership across teams

ISO/IEC 42001 places accountability for AI governance with senior management. In practice, responsibility is often split across legal, IT, data science, product, and compliance teams, with no single group overseeing the entire AI lifecycle.

This creates gaps in governance at critical moments such as model updates, vendor changes, expanded deployment, and incident response. Each team focuses on its own remit, while cross-functional decisions fall between the cracks.

Effective implementation requires clear accountability that cuts across organisational silos, supported by defined escalation paths and decision-making authority.

3. AI impact assessments treated as paperwork

Clause 8 of ISO/IEC 42001 requires organisations to carry out AI impact assessments. These are meant to evaluate how AI systems affect individuals, groups, and society, not just technical performance or security risks.

In many cases, however, these assessments become a formality. Templates are completed and approved, but they do not meaningfully influence deployment decisions or ongoing controls.

As a result, high-risk systems can be introduced without proper scrutiny, increasing the likelihood of biased outcomes, inappropriate automation of important decisions, and harm to vulnerable groups.

4. Governance that stops at deployment

Many organisations focus on development and deployment but fail to extend controls across the full lifecycle of AI systems. As these systems evolve, models lose accuracy, use cases expand, and regulatory expectations change. Without continuous governance, risk exposure increases over time.

An effective AI management system must include operational monitoring, change management, periodic reassessment, and structured retirement processes.

5. Third-party and vendor AI risk

Organisations are increasingly relying on third-party AI systems, including APIs, SaaS platforms, and externally developed models.

These dependencies are typically assessed using standard vendor risk processes that do not address AI-specific risks such as model transparency, training data integrity, or bias in outputs. As reliance on large language models and AI agents increases, unmanaged third-party risk becomes a major governance gap.

6. Competence gaps in governance

ISO/IEC 42001 requires that those involved in AI governance have the necessary skills and understanding to carry out their roles. This is often underestimated.

While not everyone needs deep technical expertise, those responsible for oversight must understand AI systems well enough to assess risk and evaluate controls.

7. Integration failures with existing systems

ISO/IEC 42001 is designed to integrate with standards such as ISO/IEC 27001 and ISO/IEC 27701.

In practice, organisations often implement AI governance separately. AI risks are not integrated into existing risk registers, and audit programmes operate in silos. This duplication increases effort while weakening governance outcomes.

8. Leadership engagement gaps

ISO/IEC 42001 places clear accountability for AI governance on senior management. This goes beyond formality. An effective AIMS depends on continued executive involvement in management reviews, policy decisions, resource allocation, and responses to significant AI risks or incidents.

In many organisations, leadership engagement is strong during the initial phase but weakens after implementation. Governance becomes operational rather than strategic, and key decisions proceed without oversight.

Without consistent engagement from leadership, the AIMS shifts into a compliance role rather than functioning as a true governance system, limiting its ability to influence decisions that shape real AI risk.

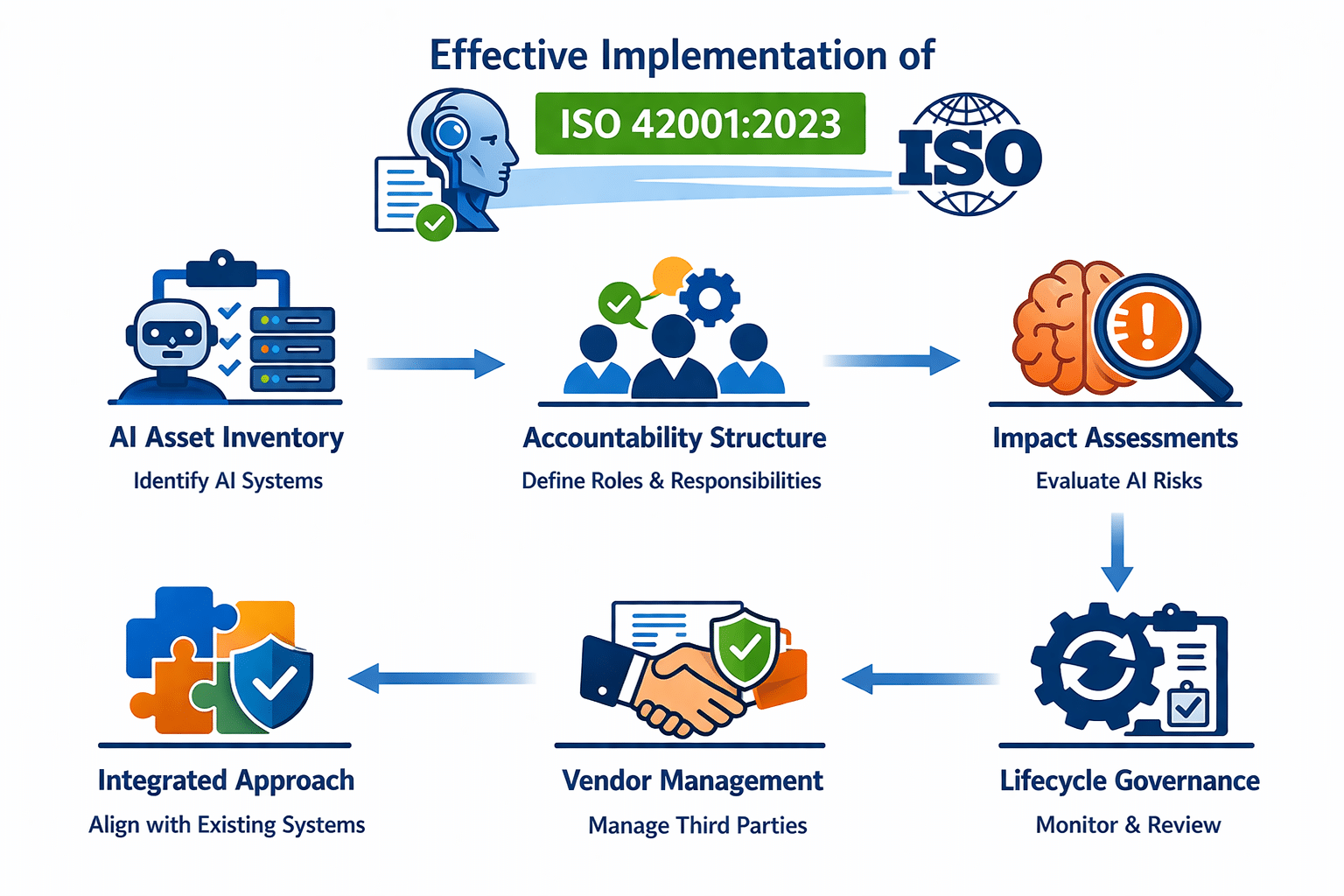

What Effective ISO/IEC 42001:2023 Implementation Looks Like

Addressing these roadblocks requires a different implementation approach, one that begins with operational reality rather than clause mapping.

1. Start with the AI asset inventory

Organisations need a clear and accurate understanding of where AI is deployed, in what form, by whom, and with what consequences. This inventory defines the scope of the AI management system and grounds subsequent governance decisions.

2. Design accountability structures before drafting policies

Governance documents are only as effective as the accountability structures behind them. Clear ownership of AI risk management must be established for impact assessments, lifecycle reviews, and escalation decisions, with the assigned individuals having both the authority and the competence to act.

3. Conduct impact assessments

The AI impact assessment process must engage people with sufficient knowledge of AI system behaviour, affected populations, and organisational context, so that it identifies non-obvious risks rather than simply completing a template.

4. Extend governance through the full lifecycle

Operational monitoring, periodic re-assessment triggers, change management processes, and structured retirement procedures must be designed and implemented alongside development-phase controls.
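As an illustration, re-assessment triggers can be written down as explicit, testable rules rather than left to judgement. The sketch below is an assumption-laden example: the `LifecycleStatus` fields, the 365-day review interval, and the 0.05 accuracy-drop threshold are hypothetical values chosen for this article, and each organisation would define its own.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class LifecycleStatus:
    """Hypothetical snapshot of one AI system's operational state."""
    last_assessed: date
    model_changed_since_assessment: bool
    use_case_expanded: bool
    baseline_accuracy: float
    current_accuracy: float


# Assumed thresholds for illustration; not taken from the standard.
REVIEW_INTERVAL = timedelta(days=365)
MAX_ACCURACY_DROP = 0.05


def reassessment_triggers(status: LifecycleStatus, today: date) -> list[str]:
    """Return the reasons, if any, why this system should be re-assessed."""
    triggers = []
    if today - status.last_assessed > REVIEW_INTERVAL:
        triggers.append("periodic review overdue")
    if status.model_changed_since_assessment:
        triggers.append("model changed")
    if status.use_case_expanded:
        triggers.append("use case expanded")
    if status.baseline_accuracy - status.current_accuracy > MAX_ACCURACY_DROP:
        triggers.append("accuracy degraded")
    return triggers


status = LifecycleStatus(
    last_assessed=date(2024, 1, 10),
    model_changed_since_assessment=False,
    use_case_expanded=True,
    baseline_accuracy=0.92,
    current_accuracy=0.85,
)
triggers = reassessment_triggers(status, today=date(2024, 9, 1))
print(triggers)
```

Returning the list of reasons, rather than a single yes or no, gives the system owner something specific to act on and leaves an audit trail of why a review was opened.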

5. Build third-party governance into vendor relationships

AI-specific risk requirements should be incorporated into procurement processes, vendor contracts, and ongoing supplier management. The organisation’s governance obligations do not diminish because a system is built or operated by a third party.

6. Integrate, not replicate

Where information security, privacy, or other management systems already exist, AI governance should be integrated into existing risk frameworks, audit programmes, and management structures, not built as a parallel system.
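One way to picture integration is a single risk register in which AI risks sit alongside information security and privacy risks, distinguished only by a domain tag. The following is a rough sketch under that assumption; the `RiskEntry` fields, the risk identifiers, and the example entries are invented for illustration and do not describe any particular GRC tool.

```python
from dataclasses import dataclass


@dataclass
class RiskEntry:
    """A row in a hypothetical shared enterprise risk register."""
    risk_id: str
    description: str
    domain: str         # e.g. "infosec", "privacy", "ai"
    owner: str
    related_asset: str


# Existing register with information security and privacy risks (illustrative).
register = [
    RiskEntry("R-001", "Unpatched web server", "infosec", "IT Security", "customer-portal"),
    RiskEntry("R-002", "Excessive retention of personal data", "privacy", "DPO", "crm"),
]

# The AI risk is appended to the same register instead of a parallel one.
register.append(
    RiskEntry("R-003", "Lead-scoring model trained on stale data", "ai", "Sales Operations", "crm")
)


def risks_for_asset(entries: list[RiskEntry], asset: str) -> list[RiskEntry]:
    """Return every risk recorded against an asset, whatever its domain."""
    return [e for e in entries if e.related_asset == asset]


crm_risks = risks_for_asset(register, "crm")
for entry in crm_risks:
    print(entry.risk_id, entry.domain, entry.description)
```

Because both entries for the `crm` asset live in one register, a single audit or management review can consider the privacy and AI risks together instead of in separate cycles.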

Closing ISO/IEC 42001 Implementation Gaps with Anzen

The challenges outlined above reflect what we consistently observe in gap assessments across organisations at different stages of AIMS implementation, from those just starting with ISO/IEC 42001 to those preparing for certification audits.

At Anzen, our approach is grounded in how organisations actually operate, not only in their documentation. We begin with a structured gap assessment that reviews existing policies, controls, and governance practices against ISO/IEC 42001. This allows us to identify not only what is missing, but also where controls exist in form but do not work effectively in practice.

We develop a phased implementation roadmap aligned to the organisation’s current maturity, prioritising operational controls. Where frameworks such as ISO/IEC 27001 are already in place, we prioritise integration rather than duplication. AI risk assessments are aligned with enterprise risk management, privacy impact assessments are extended to cover AI-specific risks, and audit programmes are designed to review AI governance alongside information security and privacy controls.

For organisations pursuing certification, we conduct ISO/IEC 42001 certification readiness assessments, identifying gaps in documentation, evidence, and audit preparedness.

AI governance is not a one-off project. It is an ongoing discipline that must evolve as systems change, regulations develop and risk profiles shift. Certification is not the end point, but the beginning of a more mature and sustained approach to governance.

ISO/IEC 42001:2023 provides a robust and certifiable framework, but it does not implement itself. Most organisations struggle in the gap between design and day-to-day operation. That is where meaningful AI governance is built.

For organisations ready to address that gap, the starting point is an honest view of where governance stands today, rather than where policies suggest it should be.

Anzen Technologies is a CERT-In empanelled cybersecurity firm offering AI governance, GRC consulting, application security, and incident response services. Contact us to discuss AIMS implementation or certification readiness.